Command-line tool to find and list/delete duplicate files.

fdupes is a simple program for identifying or deleting duplicate files within specified directories.
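
This page does not say how fdupes decides that two files are identical. As an illustration only, here is a minimal Python 3 sketch of one common approach: group files by size, compare MD5 digests within each size group, and confirm matches byte by byte. The function names (`find_duplicates`, `file_digest`, `same_bytes`) are hypothetical and are not part of fdupes.

```python
import hashlib
import os
from collections import defaultdict


def file_digest(path, chunk_size=65536):
    """Return the MD5 hex digest of a file's contents, read in chunks."""
    h = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def same_bytes(a, b, chunk_size=65536):
    """Compare two equal-sized files byte by byte to rule out hash collisions."""
    with open(a, "rb") as fa, open(b, "rb") as fb:
        while True:
            ca, cb = fa.read(chunk_size), fb.read(chunk_size)
            if ca != cb:
                return False
            if not ca:  # both files exhausted with no difference found
                return True


def find_duplicates(paths):
    """Group paths whose contents are identical; return groups of 2 or more."""
    by_size = defaultdict(list)
    for p in paths:
        by_size[os.path.getsize(p)].append(p)

    groups = []
    for candidates in by_size.values():
        if len(candidates) < 2:
            continue  # a file with a unique size cannot have a duplicate
        by_hash = defaultdict(list)
        for p in candidates:
            by_hash[file_digest(p)].append(p)
        for same_hash in by_hash.values():
            # Confirm each digest bucket byte by byte before reporting it.
            while len(same_hash) > 1:
                first, rest = same_hash[0], same_hash[1:]
                match = [first] + [p for p in rest if same_bytes(first, p)]
                if len(match) > 1:
                    groups.append(match)
                same_hash = [p for p in rest if p not in match]
    return groups
```

Grouping by size first means only files that could possibly match are read at all; the byte-by-byte pass guards against the unlikely case of two different files sharing a digest.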

When instructed, fdupes can also delete the duplicates it finds. It can follow symlinks, and it can optionally treat hard links (files that point to the same disk area) as duplicates, which it does not do by default. It can also show the size of the duplicate files.
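
According to the description of -H, files that point to the same disk area (hard links) are normally treated as non-duplicates. A simplified way to get that default, assuming a POSIX file system where `st_dev` and `st_ino` identify a file, is to keep only one path per device/inode pair before matching. `drop_hardlinks` and its `consider_hardlinks` flag are hypothetical names standing in for the -H switch; this is not fdupes's own code.

```python
import os


def drop_hardlinks(paths, consider_hardlinks=False):
    """Keep one path per (device, inode) pair unless hard links should count.

    Hypothetical stand-in for -H / --hardlinks: with the default
    consider_hardlinks=False, extra names for the same on-disk file are
    dropped so they are never reported as duplicates of each other.
    """
    if consider_hardlinks:
        return list(paths)
    seen = set()
    kept = []
    for p in paths:
        st = os.stat(p)  # follows symlinks, so a symlink maps to its target
        key = (st.st_dev, st.st_ino)
        if key in seen:
            continue  # another name for a file already kept
        seen.add(key)
        kept.append(p)
    return kept
```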

fdupes is simple, efficient, and easy to use.

To install the program, issue the following commands:

    make fdupes
    su root
    make install

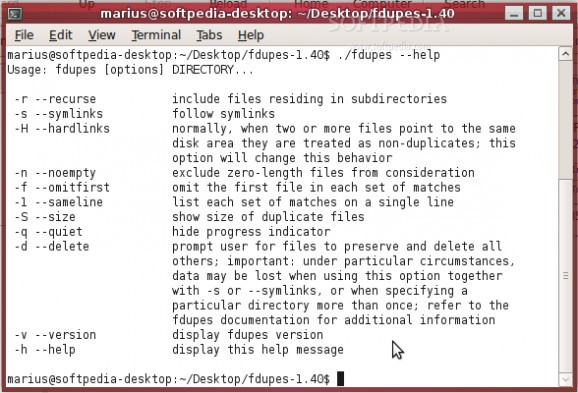

Usage: fdupes [options] DIRECTORY...

    -r --recurse     include files residing in subdirectories
    -s --symlinks    follow symlinks
    -H --hardlinks   normally, when two or more files point to the same disk
                     area they are treated as non-duplicates; this option
                     will change this behavior
    -n --noempty     exclude zero-length files from consideration
    -f --omitfirst   omit the first file in each set of matches
    -1 --sameline    list each set of matches on a single line
    -S --size        show size of duplicate files
    -q --quiet       hide progress indicator
    -d --delete      prompt user for files to preserve and delete all
                     others; important: under particular circumstances,
                     data may be lost when using this option together
                     with -s or --symlinks, or when specifying a
                     particular directory more than once; refer to the
                     fdupes documentation for additional information
    -v --version     display fdupes version
    -h --help        display this help message
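
The effect of -n and -f on the reported sets can be pictured as a filter over already-grouped duplicates. The Python 3 sketch below only illustrates that described effect and is not how fdupes is structured internally; `apply_options`, `noempty`, `omitfirst`, and the injectable `size_of` helper are hypothetical names.

```python
import os


def apply_options(groups, noempty=False, omitfirst=False, size_of=os.path.getsize):
    """Filter duplicate sets to mimic the described effect of -n and -f.

    noempty:   drop zero-length files (like -n / --noempty).
    omitfirst: leave out the first file of each set (like -f / --omitfirst).
    size_of:   function returning a file's size; os.path.getsize by default.
    """
    result = []
    for group in groups:
        if noempty:
            group = [p for p in group if size_of(p) > 0]
        if len(group) < 2:
            continue  # fewer than two files left: no longer a duplicate set
        if omitfirst:
            group = group[1:]
        result.append(group)
    return result
```

Passing a dictionary's `get` method as `size_of` lets the filter be tried without touching the file system.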

Unless -1 or --sameline is specified, duplicate files are listed together in groups, each file displayed on a separate line. The groups are then separated from each other by blank lines.

When -1 or --sameline is specified, spaces and backslash characters (`\`) appearing in a filename are preceded by a backslash character. For instance, `with spaces` becomes `with\ spaces`.
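
The two output layouts described for fdupes can be sketched in Python 3 as follows. `escape_name` applies the -1 escaping rule (a backslash before every space and every backslash); `format_groups` is a hypothetical helper, and the single space used to separate names on a -1 line is an assumption, not something this page specifies.

```python
def escape_name(name):
    """Precede spaces and backslashes with a backslash, as described for -1."""
    return "".join("\\" + ch if ch in " \\" else ch for ch in name)


def format_groups(groups, sameline=False):
    """Render duplicate groups as text.

    Default: one file per line, groups separated by a blank line.
    sameline=True: one group per line, escaped names separated by a single
    space (the separator is an assumption made for illustration).
    """
    if sameline:
        return "\n".join(" ".join(escape_name(p) for p in g) for g in groups)
    return "\n\n".join("\n".join(g) for g in groups)
```

Escaping makes a -1 line unambiguous: an unescaped space always separates two names, while `\ ` is part of a name.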

When using -d or --delete, take care to guard against accidental data loss. Although no information is lost immediately, using this option together with -s or --symlinks can present confusing information to the user when prompted for files to preserve. Specifically, a user could accidentally preserve a symlink while deleting the file it points to. A similar problem arises when a particular directory is specified more than once: every file within that directory is listed as its own duplicate, so data is lost if the user preserves a file without its "duplicate" (the file itself!).
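
One way to guard against both pitfalls before deleting anything is to resolve paths to the underlying file. The Python 3 sketch below is a hypothetical safety check, not a feature of fdupes: `unique_dirs` drops directory arguments that resolve to the same place, and `safe_to_delete` refuses a deletion when a file to be deleted is the same file as one being preserved, which is what happens with a preserved symlink or a directory listed twice.

```python
import os


def unique_dirs(dirs):
    """Drop repeated directory arguments (after resolving symlinks and '.').

    Scanning the same directory twice makes every file look like its own
    duplicate, which is the data-loss scenario described for -d.
    """
    seen = set()
    result = []
    for d in dirs:
        real = os.path.realpath(d)
        if real not in seen:
            seen.add(real)
            result.append(d)
    return result


def safe_to_delete(keep, delete):
    """Return False if any path to delete is the same file as a kept path.

    os.path.samefile compares device and inode (following symlinks), so it
    catches a path listed twice and a symlink pointing at the deleted file.
    It is conservative: hard links to a kept file are flagged as well.
    """
    return not any(os.path.samefile(k, d) for k in keep for d in delete)
```

The check is deliberately conservative: deleting one name of a hard-linked file does not remove the data, but it is still flagged here.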

fdupes 1.40

- runs on: Linux
- filename: fdupes-1.40.tar.gz
- main category: Utilities
